Partial Differential Equations

Surrogate physics models powered by diffusion-based generative learning across forward and inverse problems.

Score-based diffusion models offer a unified, probabilistic framework for solving forward and inverse problems in scientific computing. Our team demonstrates their effectiveness for robust uncertainty quantification (UQ) in two key applications: field reconstruction and large-scale weather forecasting. The benefits of using generative models for scientific computing can be summarized as follows:

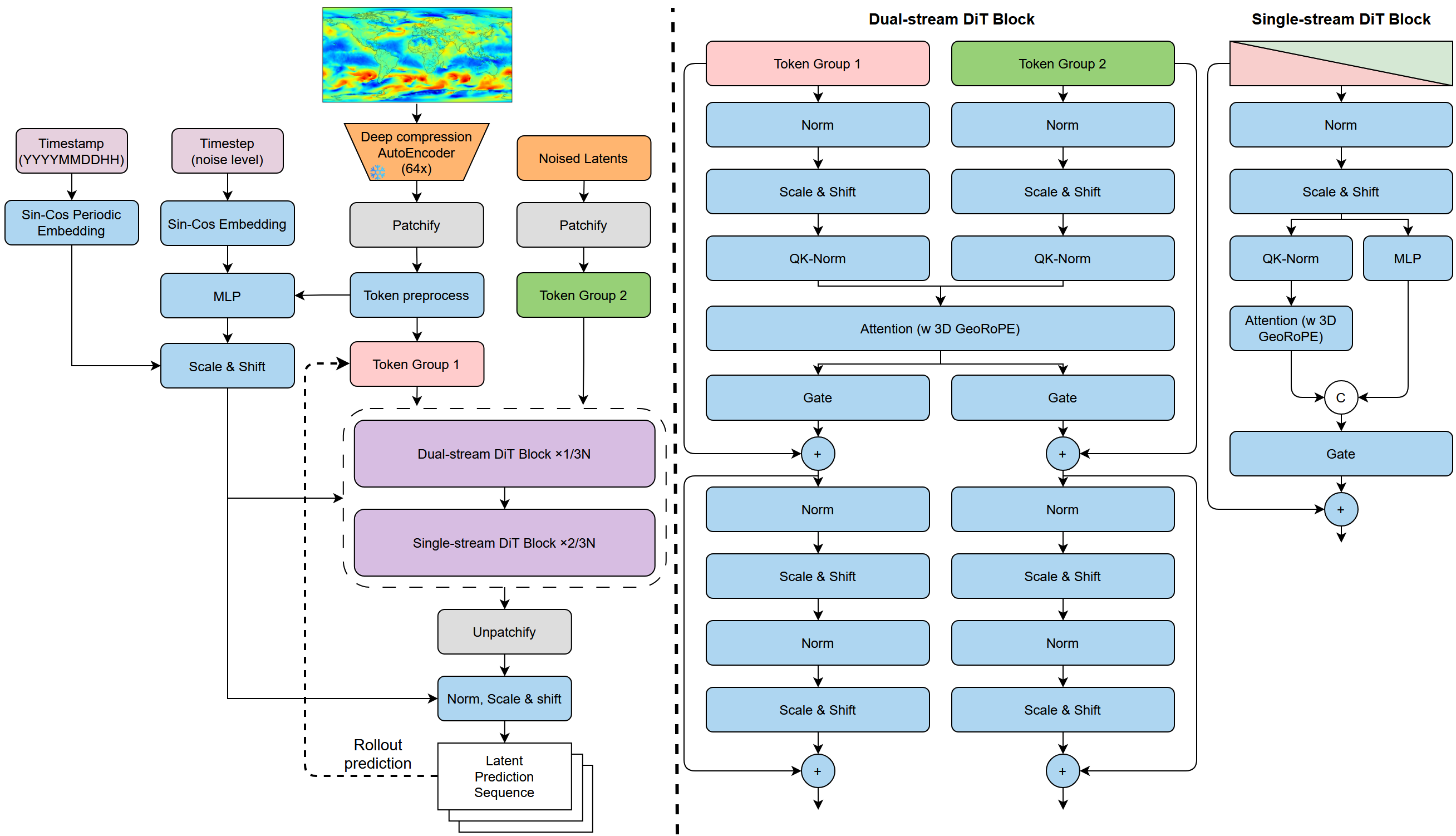

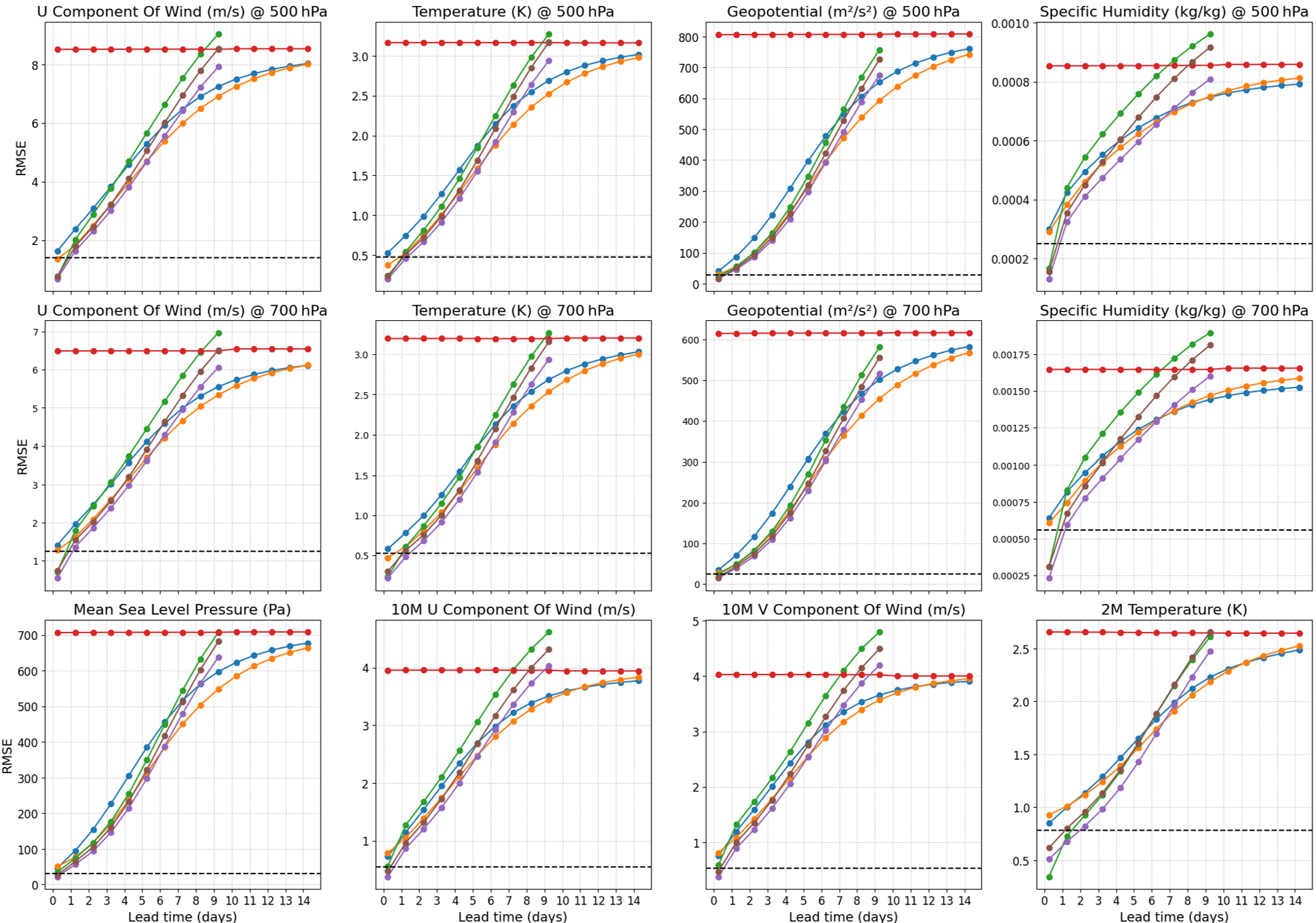

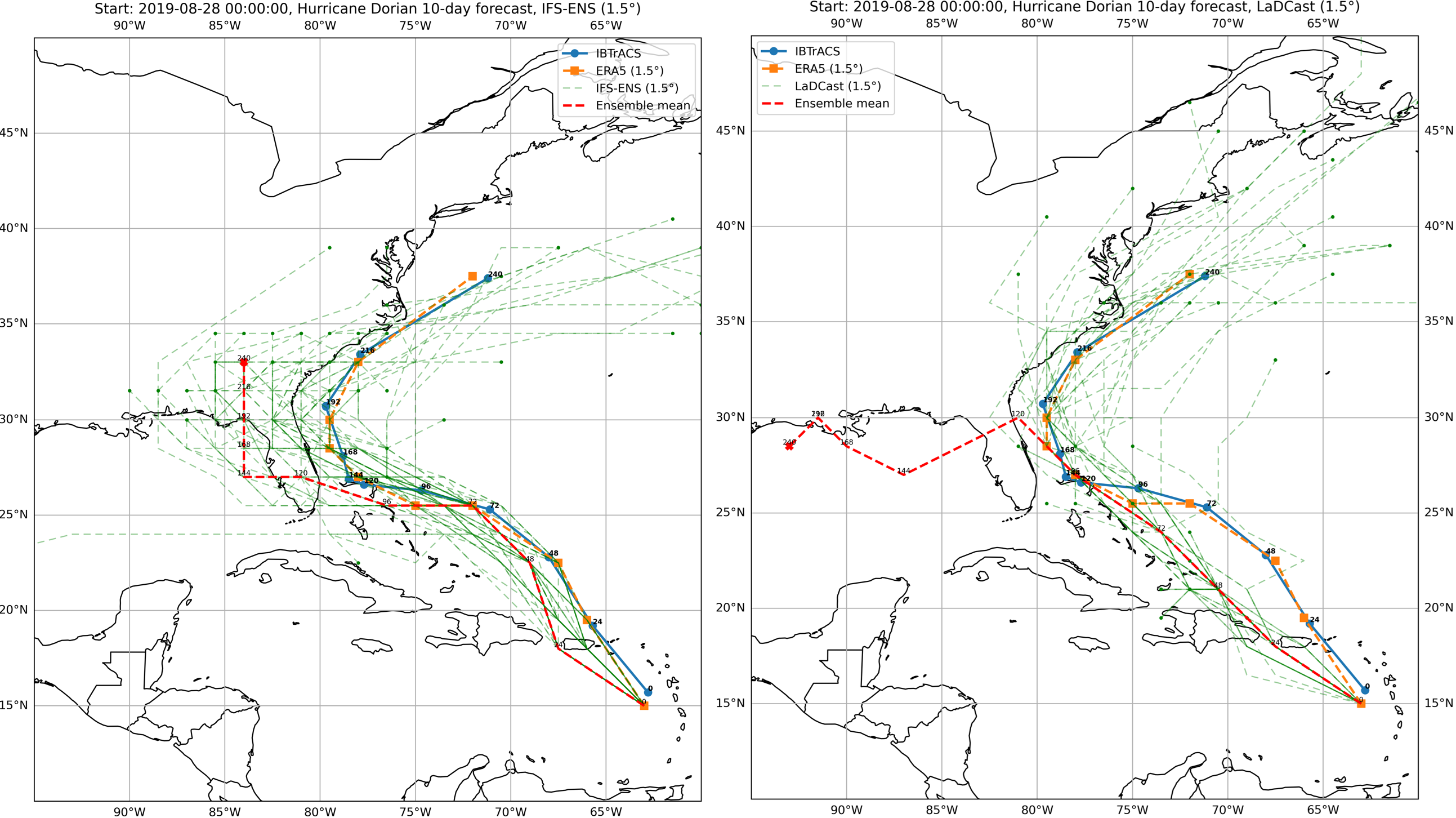

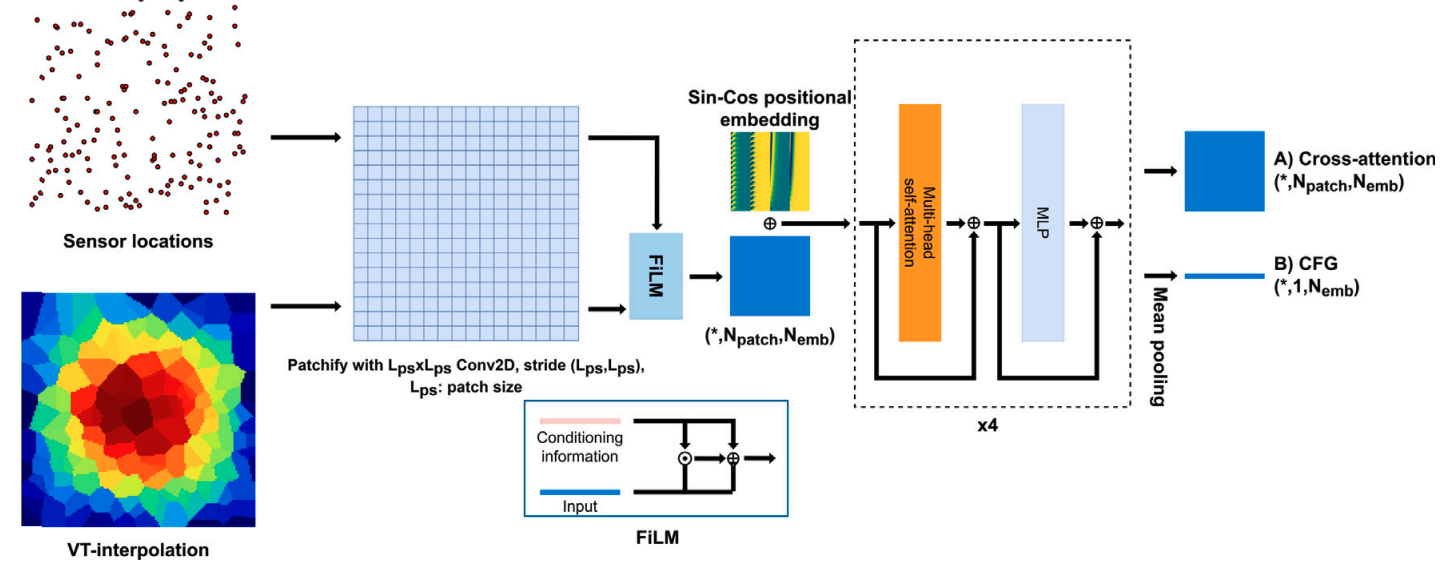

For forward problems, we introduce LaDCast, the first latent diffusion model for global weather forecasting. Trained on the ERA5 reanalysis dataset (1979–2017, 121×240 grid), LaDCast employs a deep compression autoencoder (DC-AE) with modified CNN kernels to efficiently encode large-scale atmospheric data. The model achieves performance comparable to the state-of-the-art probabilistic numerical weather prediction system (IFS-ENS) without requiring perturbed initial conditions and demonstrates superior skill in precipitation prediction.

A 15-day forecast can be generated in under one minute on a single H100 GPU, with multiple ensemble members produced in parallel. This enables computationally efficient, scalable, and low-bias uncertainty quantification for autoregressive predictions.
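The idea of producing ensemble members in parallel from a single initial condition can be sketched as below. This is a minimal illustration, not the LaDCast implementation: `toy_step` is a hypothetical stand-in for one stochastic forecast step (a real system would draw the next state from a trained diffusion model), and the array shapes, member count, and step count are arbitrary assumptions.

```python
import numpy as np

def toy_step(state, rng):
    """Hypothetical stochastic forecast step (assumption, not LaDCast)."""
    # Damped persistence plus Gaussian noise stands in for a sampled model output.
    return 0.95 * state + 0.1 * rng.standard_normal(state.shape)

def ensemble_rollout(x0, n_members, n_steps, seed=0):
    """Autoregressively roll out n_members trajectories from one shared initial state."""
    rng = np.random.default_rng(seed)
    # All members start from the same, unperturbed initial condition;
    # ensemble spread arises only from the stochastic sampling in each step.
    state = np.repeat(x0[None, ...], n_members, axis=0)
    trajectory = [state]
    for _ in range(n_steps):
        state = toy_step(state, rng)  # every member advances in one batched call
        trajectory.append(state)
    return np.stack(trajectory, axis=1)  # shape: (members, steps + 1, *x0.shape)

x0 = np.ones((4, 8))                      # toy "atmospheric state" on a 4x8 grid
traj = ensemble_rollout(x0, n_members=16, n_steps=10)
ens_mean = traj.mean(axis=0)              # pointwise ensemble mean per lead time
ens_spread = traj.std(axis=0)             # pointwise ensemble spread per lead time
```

Because the members share the initial state, the spread is zero at the first time index and grows with lead time, which is the quantity an ensemble forecast uses as its uncertainty estimate.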

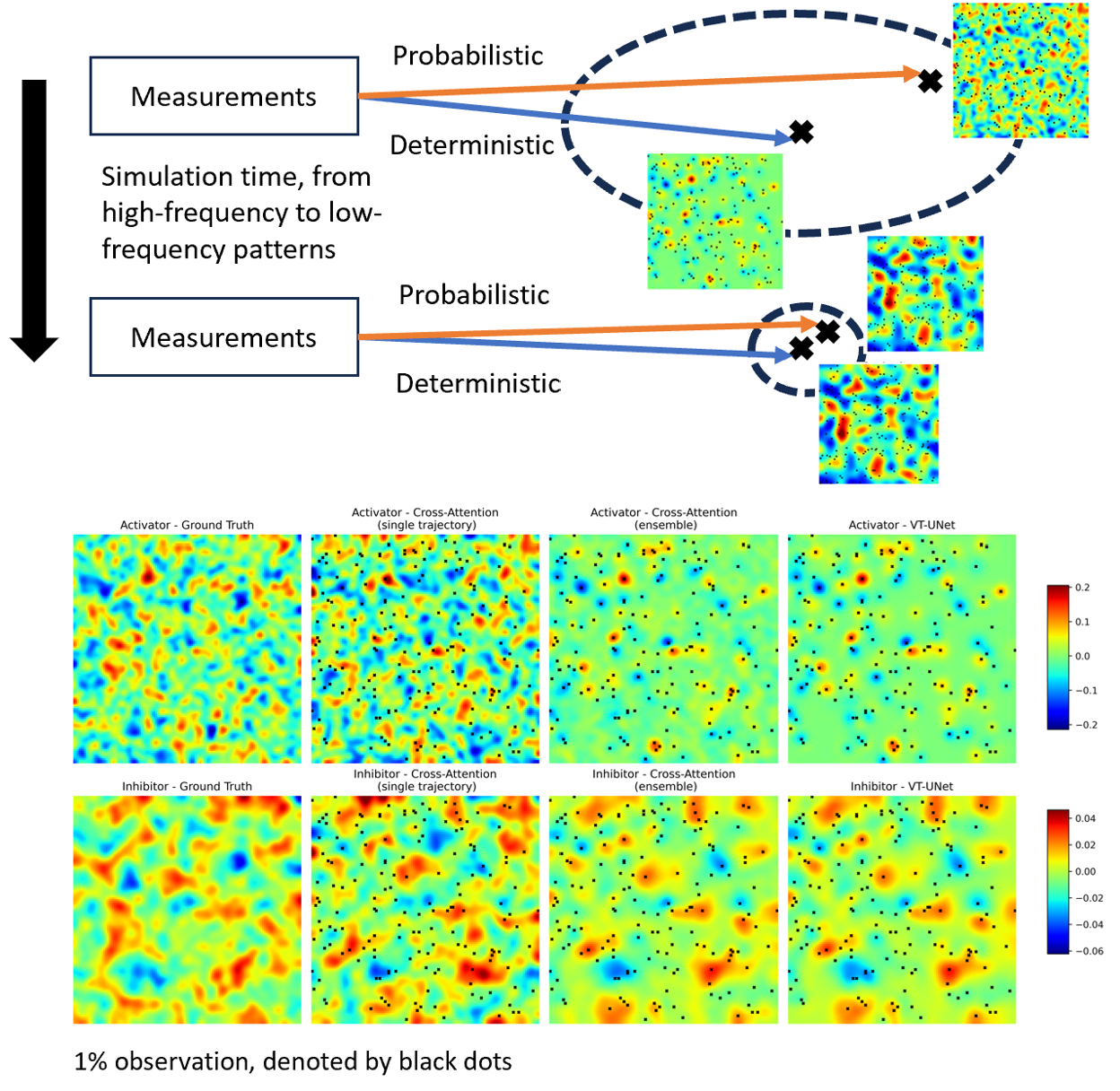

The model reconstructs global fields from sparse data by generating an ensemble of plausible realizations.

For inverse problems, we present a diffusion-based framework for reconstructing spatial and spatio-temporal fields from sparse, noisy sensor observations. Unlike traditional deterministic reconstruction methods, this probabilistic formulation captures the uncertainty and variability inherent in sparsely observed systems.
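To make the setup concrete, the sketch below shows how sparse, noisy sensor observations might be formed from a full field, and how an ensemble of reconstructions can be reduced to a mean estimate and a pointwise uncertainty map. Everything here is illustrative: `observe` and `summarize` are hypothetical helper names, and the "ensemble" is a placeholder (truth plus noise) standing in for samples that a trained diffusion model would generate.

```python
import numpy as np

def observe(field, sensor_idx, noise_std, rng):
    """Sample the flattened field at sensor locations and add Gaussian noise."""
    clean = field.ravel()[sensor_idx]
    return clean + noise_std * rng.standard_normal(len(sensor_idx))

def summarize(ensemble):
    """Pointwise mean and standard deviation across ensemble members (axis 0)."""
    return ensemble.mean(axis=0), ensemble.std(axis=0)

rng = np.random.default_rng(0)

# Toy ground-truth field on a 16x32 grid (assumed shape).
truth = np.sin(np.linspace(0.0, 2.0 * np.pi, 32))[None, :] * np.ones((16, 1))

# 20 randomly placed sensors out of 512 grid points, with measurement noise.
sensor_idx = rng.choice(truth.size, size=20, replace=False)
y = observe(truth, sensor_idx, noise_std=0.05, rng=rng)

# Placeholder ensemble: in practice each member would be one conditional sample
# from the generative model given the observations y.
ensemble = truth[None] + 0.1 * rng.standard_normal((8,) + truth.shape)
mean, std = summarize(ensemble)
```

The standard deviation map is what distinguishes this formulation from a deterministic reconstruction: regions where members disagree are flagged as uncertain.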

Built upon a UNet backbone with cross-attention, the model effectively integrates spatial context from sparse measurements to infer full-field structures. This design allows the network to represent multiple plausible realizations rather than memorizing specific patterns, providing robustness against noise and incomplete observations.
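As a rough illustration of how cross-attention lets grid features draw on sparse measurements, the following single-head sketch uses grid features as queries and sensor tokens as keys and values. It is not the model's actual layer: the token layout (for example, coordinates plus a measured value per sensor), all dimensions, and the random projection matrices are assumptions made for the example.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(grid_feats, sensor_tokens, Wq, Wk, Wv):
    """Single-head scaled dot-product cross-attention.

    grid_feats:    (N, d)   features at N grid locations (queries)
    sensor_tokens: (M, d_s) one token per sensor (keys and values)
    Returns the attended features (N, d_k) and the attention weights (N, M).
    """
    Q = grid_feats @ Wq
    K = sensor_tokens @ Wk
    V = sensor_tokens @ Wv
    scores = Q @ K.T / np.sqrt(Q.shape[-1])  # similarity of each grid point to each sensor
    weights = softmax(scores, axis=-1)       # each row sums to 1 over the sensors
    return weights @ V, weights

rng = np.random.default_rng(0)
N, M, d, d_s, d_k = 64, 10, 16, 3, 8           # illustrative sizes only
grid_feats = rng.standard_normal((N, d))
sensor_tokens = rng.standard_normal((M, d_s))  # e.g. (x, y, value) per sensor (assumed)
Wq = rng.standard_normal((d, d_k))
Wk = rng.standard_normal((d_s, d_k))
Wv = rng.standard_normal((d_s, d_k))
out, weights = cross_attention(grid_feats, sensor_tokens, Wq, Wk, Wv)
```

Because the keys and values come from a variable-length set of sensor tokens, this kind of layer can in principle accept different sensor counts and placements without changing the backbone.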